Chapter 2 URSSI Conceptualization

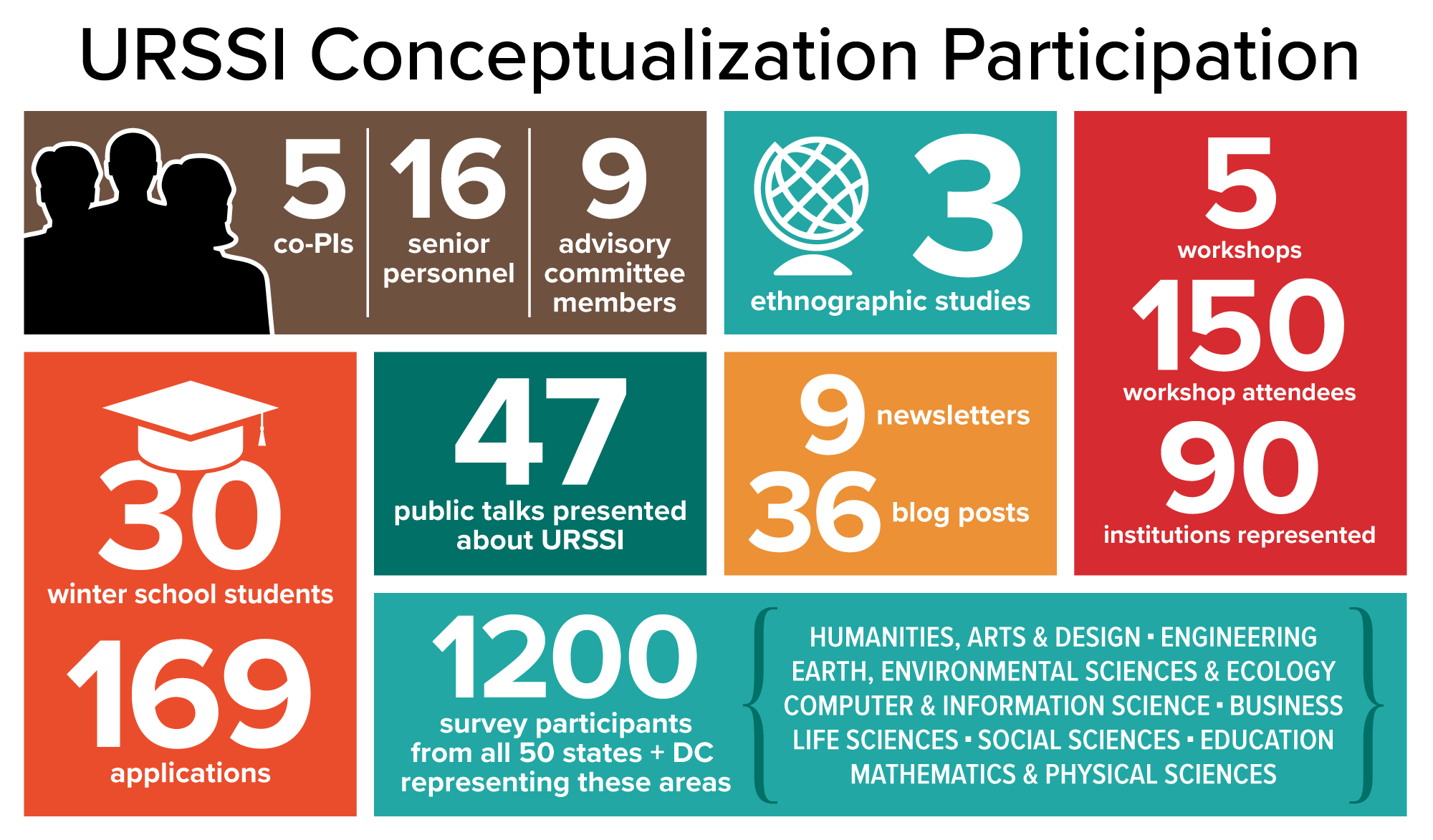

The purpose of this conceptualization project was to create a roadmap for a US Research Software Sustainability Institute (URSSI). The roadmap was to be informed by and responsive to a community of potential stakeholders, including researchers, software developers and users, funders, and program/product managers. To engage this diverse group of people and institutions, we carried out the activities described in this section: a series of workshops, community outreach and communication, a survey, a set of ethnographic studies, and a pilot Winter School for early-career researchers. At the conclusion of this section, we summarize the challenges identified collectively across all of the URSSI conceptualization activities and the role we believe URSSI should play in addressing them.

2.1 Workshops

Community-wide workshops: In 2018 we held two community workshops with URSSI stakeholders, in Berkeley (April) and Chicago (October). We invited participants based on their ability to represent diverse roles, institutions, and perspectives on research software. The general goal of the two community workshops was to determine which topics this diverse group of stakeholders considers well understood, which topics remain uncertain or need guidance, and what work remains to be done to support sustainable research software. URSSI PIs facilitated broad discussions and participated in small-group breakout discussions. Additionally, participants at each workshop gave presentations about their ongoing research software activities.

Thematic Workshops: Participants at the first community workshop identified two topics worthy of more focused discussion and community input. We organized the following workshops to better understand those topics:

The Metrics, Credit and Citation Workshop, held in Santa Barbara, California in January 2019, focused on research software metrics, citation, and impact evaluation. The 23 participants had strong expertise in the various workshop themes, including the PIs of the CodeMeta project, Zenodo, and software credit initiatives such as SourceCred.

The Research Incubators Workshop, held in College Park, Maryland in February 2019, focused on methods for incubating new and existing research projects. The 20 participants had strong expertise in research software project development, including program officers from various government agencies, open-source research software developers, and organizational scholars who have studied research software processes.

URSSI Design Workshop: In April 2019 members of the Senior Personnel and Advisory Committees participated in a workshop in Chicago, IL. PIs presented preliminary results from our research (ethnographies and survey) as well as lessons learned from the four previously held URSSI workshops.

2.3 Survey

We developed and disseminated a survey to complement workshop participation. The primary goal of the survey was to gather the opinions, preferences, and self-reported activities of the research software community regarding development practices, development tools, training, funding/institutional support, career paths, credit for software work, and diversity/inclusion. For each of these topics, we asked a small number of general questions and then allowed participants to self-select to answer more detailed questions about any area of particular interest. We distributed the survey to PIs of currently funded NSF and NIH projects and to relevant mailing lists. The survey closed in May 2019 after receiving approximately 1200 responses.

The results of the survey highlighted some areas where URSSI could play a key role in advancing the sustainability of research software in the United States.

There was a mismatch between how respondents wanted to allocate their time and how they actually allocated it. This result provides an opportunity for URSSI to work with developers and teams to help them focus their efforts on the most relevant tasks.

The aspects of the software development process respondents viewed as being more difficult than they should be tended to be people-related activities rather than technical activities. This result suggests that URSSI could support teams and developers by providing training and/or resources related to human factors in the software development process.

Respondents indicated that they use a number of software development practices. However, one key practice, peer code review, was underutilized. URSSI could provide training on peer code review and work with teams to ensure that the infrastructure is in place to support this activity appropriately in the research software space.

The respondents indicated that software development practices including requirements, design, maintenance, and documentation were not well-supported by tools.

In terms of version control, a sizable percentage of respondents still rely on ad hoc methods, such as copying files to another location or making zip file backups. This result suggests that URSSI could provide additional training and tools to help teams adopt modern version control systems.

Many of the items above relate to training, or the lack thereof. The survey results suggest that a large percentage of respondents have not received training in software development. While respondents indicated that there were sufficient opportunities for training, most reported that they did not have sufficient time to take advantage of them. URSSI could help by providing training in formats that better fit the demands of research software developers’ work environments.

Many respondents also found the level of funding support inadequate for success. URSSI could help by advocating, at both the national funding level and the university level, for increased funding for important research software development activities.

Respondents also indicated their software contributions were not significantly valued in performance reviews. URSSI could help by developing and advocating for policies that help research software developers get adequate recognition for their work.

Most projects lack a formal diversity plan. URSSI could help by providing template diversity plans and support for developing appropriate plans for individual projects.

2.4 Ethnography

To gain a deeper understanding of the practices and experiences of researchers who are actively engaged in software development, we have undertaken a series of ethnographic studies. These studies focused on software projects of varying size and complexity in the fields of hydrology, astronomy, and biochemistry. Using observations, semi-structured interviews, and a series of archival documents, we produced case studies of how, over time, these projects overcame challenges of recruiting contributors, building a governance model, seeking funding, and sharing credit in sustaining a software project that has demonstrable impact on a community of researchers.

We developed two of these studies, Astropy and Rosetta Commons, into full case studies. The two projects faced distinct sustainability challenges and solved them somewhat differently. While the main findings of this work are unlikely to surprise anyone familiar with the challenges of sustaining research software, the value of this work lies in comparing the two cases. By better understanding common approaches to overcoming sustainability challenges, we believe there is a valuable opportunity to abstract these approaches into models of success that research software projects in other domains can adapt. The following is a brief summary of the findings from these two case studies.

Points of comparison:

Distributed work coordination: Key to the success of both Astropy and Rosetta Commons is coordination of remote collaborative work. Astropy mirrors Python’s core development team in structuring contributor guidelines and supporting designated maintainers. Rosetta Commons differs substantially in that the project employs four full-time infrastructure maintainers. This offloading of maintenance responsibilities frees up contributors (distributed labs throughout the USA) to focus on conducting research and driving innovations that extend Rosetta’s key functionality.

Funding: Astropy is fiscally sponsored by NumFOCUS but depends on grant funding from a variety of sources to sustain its collective work. A recent grant from the Gordon and Betty Moore Foundation, “Sustaining and Growing the Astropy Project,” focuses exclusively on maintenance and governance to ensure the project’s long-term viability for practicing astronomers. Rosetta Commons combines licensing and grant funding to sustain its work. Licenses for commercial use and an NIH infrastructure maintenance grant have continuously supported the project since 2005.

Credit: A number of papers have been published about the development of both projects. Astropy suggests two publications to cite when acknowledging use of the software (???; ???). These two publications have, combined, over 3000 citations. Rosetta Commons has no canonical citation because it has many versions, methods, and language-specific implementations. Contributors to Rosetta noted in interviews that this lack of a canonical citation confuses authors rushing toward publication, and many researchers have sought a centralized source they could acknowledge. Despite the lack of a canonical citation, two papers describing Rosetta and its use in predicting protein structures have, combined, over 4300 citations (???; ???).

We observe differences between the two projects that seem marginal at first glance but, on further analysis, have important practical consequences for software development activities. In particular, two differences have consequences for the long-term sustainability of each project:

Astropy, following an open model of contribution, focuses time and attention on documenting its contributor guidelines clearly and making them accessible to research software engineers. The maintenance team therefore focuses its effort on supporting contributors while keeping various packages up to date and available to the community that depends on this code for its research. This approach partly reflects the fact that the software ecosystem is broadly useful to an entire discipline (astronomy), whereas Rosetta Commons focuses on specific analytic tasks within a subfield of biological engineering (macromolecular modeling).

Continuous annual grant funding and licensing fees allow Rosetta to centralize infrastructure tasks in a core team whose sole job is maintenance. This approach in turn encourages innovation and the expansion of feature sets among the labs that focus solely on producing new research insights. Astropy has, over time, centralized the project’s software development and maintenance, but until very recently this maintenance was a volunteer activity. Shifting time, attention, and energy toward organizing an open-source model of development affects the career trajectories of practicing astronomers and contributes to a more fragile ecosystem for astronomy.

We recognize that software sustainability is more than just financial support, but what these case studies make clear is that the economic realities of maintaining and contributing to software development have important downstream impacts that shape innovation and engagement. The URSSI conceptualization has studied how these challenges can be practically overcome.

2.5 Winter School

In late December 2019 we ran our first-ever URSSI school on research software engineering. We began accepting applications in July and received an overwhelming response: for the 30 available participant slots, we received 169 applications, which made selection difficult and meant turning away a large number of interested researchers. While the selection committee used multiple criteria to evaluate applicants, successful applicants already had some experience with Python programming, Git, and Unix, which was necessary to benefit from the workshop. Our goal was not to repeat the material covered in bootcamps and Software Carpentry-style workshops, but to focus on research software engineering skills that are not formally taught in any setting. These skills include best practices for packaging code as software, testing, collaborative software development, code review, and related topics such as licensing and archiving. The school lasted 2.5 days.

The demand described above made evident a strong need for the skills covered in the school, and the overall feedback from participants was positive (see the example quotes below). Key lessons from this pilot that inform our design of a Summer School as part of URSSI are: 1) the school needs to be longer, to allow both discussion time and focused time for students to work on their own code and apply the lessons from the lectures; and 2) having helpers in addition to the primary instructors was quite beneficial for answering students’ specific questions.

A few example quotes from the Winter School feedback form include:

“I really can’t say enough good things about this super empowering workshop! You did an amazing job identifying the things I didn’t know that I didn’t know, and teaching them at a level that was immediately actionable in my work.”

“Thank you so much to everyone for taking this amazing initiative to teach young scientists on software sustainability.”

“Thank you for putting together this winter-school, it was super useful to me and I’m looking forward to applying everything I learned to my future projects and to go deeper into the topics that were covered.”

2.6 Joint Activities

During the URSSI conceptualization process, we helped start two new activities whose goals overlap with ours, both to promote those goals and to build up future partners for a full URSSI institute.

2.6.1 Research Software Alliance (ReSA)

ReSA was founded in 2018, at the instigation of URSSI, the UK Software Sustainability Institute, and the Australian Research Data Commons, to support the recognition and valuing of research software as a fundamental and vital component of research worldwide. ReSA’s mission is to bring research software communities together to collaborate on the advancement of research software. Recent achievements include:

Development of research software guidelines for policy makers, funders, publishers, and the research community for inclusion in the RDA COVID-19 Guidelines and Recommendations (???)

Inclusion of software in the draft revision of the OECD Committee for Science and Technology Policy’s Enhanced Access to Publicly Funded Data for Science, Technology and Innovation (which will become soft law)

Co-leadership of the FAIR 4 Research Software taskforce with FORCE11 and RDA to develop community-agreed principles and implementation guidelines

Support from the Gordon and Betty Moore Foundation to achieve ReSA’s goals

The Director of ReSA is Michelle Barker, and the Steering Committee comprises:

- Neil Chue Hong, Director, Software Sustainability Institute, UK

- Catherine Jones, Software Engineering Group Leader, STFC, UK

- Daniel S. Katz, Assistant Director for Scientific Software and Applications, National Center for Supercomputing Applications (NCSA), University of Illinois, USA

- Chris Mentzel, Executive Director, Stanford University, USA

- Karthik Ram, URSSI PI, University of California, Berkeley, USA

- Andrew Treloar, Director, Platforms and Engagements, Australian Research Data Commons, Australia

2.6.2 Research Software Engineering (RSE)

In a 2012 workshop run by the UK SSI, a number of UK researchers who develop software realized that, although they and others at their universities recognized common elements in the work they did, there was no common understanding of how others saw this work and these roles, and that they needed to come together to build and develop a community. As James Hetherington stated, they decided they needed to “develop the profession of a scientific software engineer and the career track of software developers in academia.” The SSI studied academic job descriptions in the UK and found that, of about 10000, about 400 were related to software development, under 194 different job titles. To build recognition of the common software elements of these positions, the SSI chose the name “research software engineer.” The SSI then began publicizing this idea and building a community of people who identified with the role, which led to the formation of the UK RSE Association, now the Society of Research Software Engineering. The SSI and the RSE Association/Society encouraged people performing RSE-like work to identify with this title, built RSE groups in UK universities, encouraged universities to change their job titles, held workshops and then conferences for RSEs, encouraged a funding agency to provide fellowships to RSEs, and built up the association/society, which now has about 2000 members (???).

As a number of non-UK people (including some URSSI PIs) started attending UK RSE conferences, the SSI also supported activities to grow the international community. These self-organized and SSI-supported activities have led to RSE groups in Germany, the Netherlands, the Nordic countries, the US, Canada, Australia/New Zealand, South Africa, and Belgium, some of which have now held national workshops and conferences. The US Research Software Engineer Association (US-RSE) formed in 2018 with the support and participation of three of the five URSSI PIs, and had 420 members as of May 2020. The US-RSE Association is centered on three main goals:

Community: to provide a coherent association of those who identify with the role (not necessarily title) of Research Software Engineer, and to provide the members of the community the ability to share knowledge, professional connections, and resources.

Advocacy: to promote RSEs’ impact on research, highlighting the increasingly critical and valuable role RSEs serve.

Resources: to provide useful resources to multiple demographics, including technical and career development resources, and information and material to support the establishment and expansion of RSE positions and groups within the research ecosystem.

US-RSE’s goals thus overlap with URSSI’s in several areas, giving us a clear reason to work together going forward.

2.7 Synthesis of URSSI Activities

In the following section we describe common challenges and dilemmas we identified across URSSI’s conceptualization activities. Where possible, we identify the role URSSI could play in helping overcome these challenges as a funded institute.

Challenge 1: Decentralized expertise

Throughout the planning activities, the community of stakeholders recognized that a number of individual efforts already exist to help improve the development, maintenance, and overall sustainability of research software in the US. While these efforts are collectively comprehensive in scope, the decentralized structure of this expertise is inefficient for both resource discovery and service delivery. For example, there are many sources of expertise in research software development (e.g., SWEBOK, RSEs), education and training (e.g., academic initiatives, the Carpentries), credit and metrics (e.g., CiteAs), and incubation (e.g., ESIP, the UW eScience Institute) that are available to individuals at specific institutions. However, there is currently no comprehensive organization that can coordinate these activities, promote centers of expertise, and ensure that effort is not duplicated, a role that the Software Sustainability Institute plays admirably in the UK.

As a coordinating center, URSSI could help solve this challenge in the following ways:

Broker connections between points of expertise and serve to coordinate efforts and funding such that different experts could better collaborate on sustainable development, education, and policy initiatives.

Identify and convene experts to help fill gaps between services, for example, where the Carpentries see gaps in knowledge or skill acquisition in sustainable software education.

Disseminate best practices from specific disciplines to the broader research community. By acting as a center of research software excellence URSSI could be a space where solutions from one domain could be learned and efficiently transferred to another.

Coordinate and help to curate relevant research software packages through discovery portals, such as https://libraries.io/

Challenge 2: Pathways to sustainability for non-commercial research software

Throughout the URSSI conceptualization activities, participants voiced concern about the viability of valuable software projects that do not seek commercialization. Technology transfer programs at universities and through research funding agencies (e.g., I-Corps, SBIR/STTR) are well established and have been successful at helping entrepreneurially focused software projects identify and execute viable business models. This transition pathway is promoted for software that has the potential to sustain itself through fees or licensing agreements. However, there is no equivalent university guidance for software projects that want to pursue non-commercial, open-source models of sustainability.

There is a need for a US-based institute, independent of any single university, to help valuable research software projects realize a non-commercial, open-source route to sustainability. This support could include a program, such as an incubator, that would guide research software projects in developing open-source governance, budgeting, licensing, and fiscal sponsorship in service of non-commercial sustainability. We view this route, and an institute’s guidance along it, as critical to promoting an ecosystem of diverse research software projects that may not be readily amenable to commercialization, or that have the potential to build healthy, viable volunteer developer communities as an alternative to licensing.

Challenge 3: Coordinated advocacy and analysis for policy change

Related to the first challenge of decentralized expertise, there is a need for coordination and targeted leadership around policy for sustainable research software. Participants in URSSI workshops described a need to coordinate communities around emerging national, institutional, and even discipline-specific policies that have a downstream impact on sustainability, such as software citation principles, tenure and promotion guidelines that recognize research software contributions, sustained funding or financial support for research software maintenance, and software management plans that explicitly document expectations around software development and archiving.

Currently, community members take on many of these policy activities as additional professional service or as volunteer work. Because each policy issue remains a secondary focus, progress is slow, volunteer turnover is high, and no one person or institution can develop the deep expertise needed for effective, sustained analysis or advocacy.

An institute that could dedicate time and attention to policy design, invest personnel time in developing the needed expertise, and coordinate a sustainable lobbying network would make advocacy work more manageable and effective and lead to better outcomes for research software stakeholders. Further, advocacy work often needs to be driven by empirical research that can substantiate how or why one policy decision is better than another. An institute dedicated to these activities would facilitate data-driven policy research that could lead to stronger advocacy positions and benefit funding agencies, universities, and research institutions that seek to adopt new policies aimed at improving research software sustainability.

Challenge 4: Signals of viability

A valuable contribution of the URSSI workshop and engagement activities has been convening stakeholders with expertise in the evaluation and funding of research software. These stakeholders include current and former program officers from NSF, NIH, DOE, DoD, and IMLS, as well as philanthropic organizations such as the Gordon and Betty Moore and Alfred P. Sloan Foundations. These participants share a desire for an institute that would develop stronger signals of a research software project’s viability, help evaluate a project’s potential for long-term sustainability, and help communities of research software stakeholders coalesce around strategic funding initiatives that could benefit research through software investments.

A dedicated US institute focused on software sustainability could help meet this challenge in a number of ways, but we recognize that there is no overarching or simple solution. The plan we describe in the next chapter explains, in numerous places, how URSSI can and should convene different communities to address complex problems such as strategic financial investment and evaluation of viability.

Challenge 5: Career Advancement and Credit

Closely related to Challenges 1, 3, and 4 is the desire of URSSI stakeholders for an institute to dedicate time and resources to promoting recognition of research software activities and helping to shape reliable career pathways for research software engineers (RSEs). At URSSI workshops, there was near-unanimous agreement that research software contributions remain difficult to get recognized for career advancement and that universities and funding agencies lack clear guidance on how to make progress on these issues.

A dedicated US-based institute for research software sustainability could play a leading role in advocating for best practices in the measurement, reward, and credit of research software activities. This activity would include dedicated research into measuring and evaluating software development activities, advancing techniques for evaluating use and user impact, and promoting formative metrics that are conducive to long-term viability. Relatedly, a dedicated US-based institute could better evaluate and promote the impact of existing research software engineering positions and advocate for their creation where they do not exist.

In the next chapter, we describe URSSI’s plan to meet each of these challenges. In particular, we focus on how a strategic investment in a US-based software sustainability institute would practically organize itself, allocate budgets, and measure progress on each of the challenges identified in the conceptualization phase.